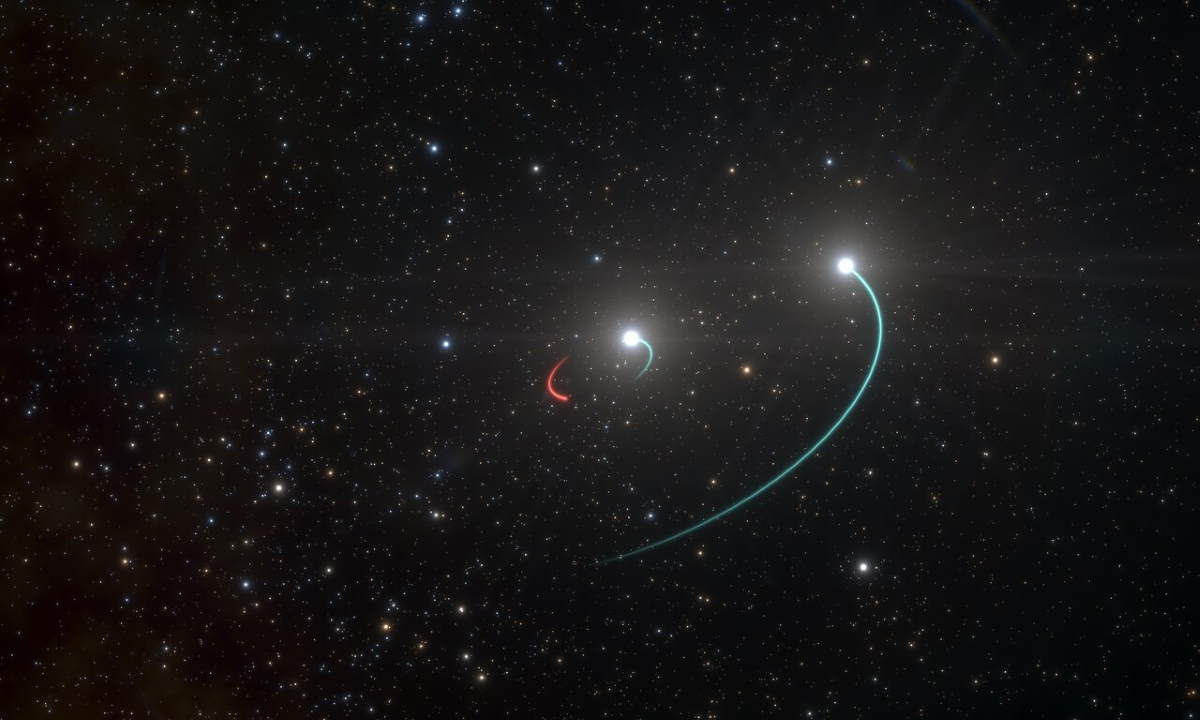

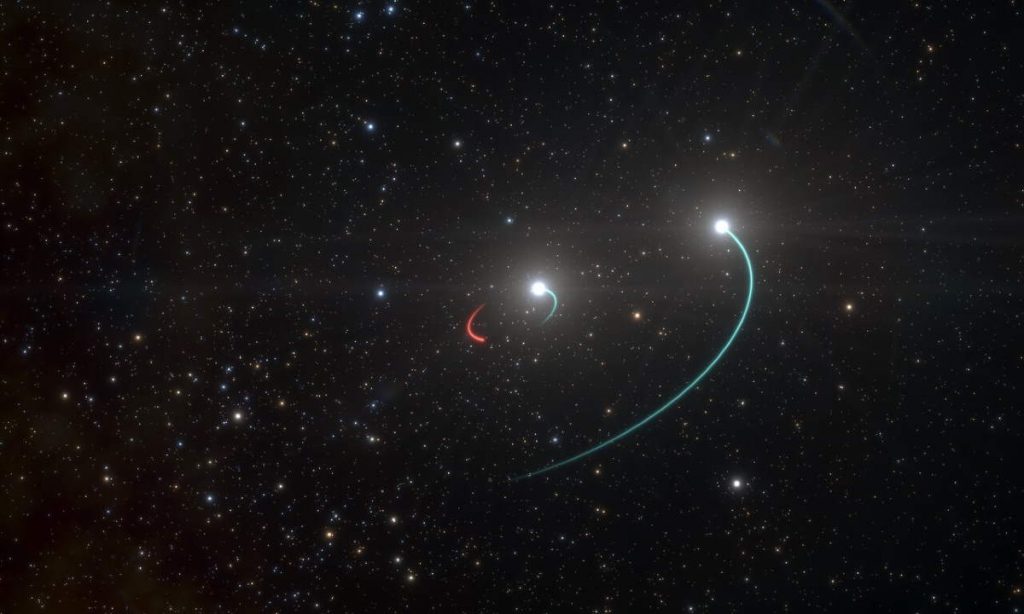

Well, of course, you cannot see a black hole, but the newly discovered one has two companion stars, and you can see these stars from Earth even with the naked eye. The triple system is named HR 6819. Its unseen inner component, designated QV Tel Ab, is the closest known black hole to Earth.

The system appears as a variable star that is dimly visible to the naked eye.

HR 6819 (QV Telescopii), the closest black hole (known so far)

Previously considered a single star, HR 6819 (also known as HD 167128 or QV Telescopii) was found to be a multiple system through radial velocity measurements in 2020, which suggested the presence of an unseen stellar-mass black hole within the system. The system is 1,120 light-years from the Sun, so the “unseen” component is the closest black hole to Earth discovered so far.

The three components are:

- QV Tel Aa: The main, inner stellar component, termed Aa, is a B3 III blue giant star with a mass of approximately 6 solar masses. It and the black hole form a binary with a period of 40.3 days.

- QV Tel B: The second, outer stellar component, termed B, is a Be star with a stellar classification of B3 IIIpe. The ‘e’ suffix indicates emission lines in its spectrum. It is a rapidly rotating blue-white star surrounded by a hot decretion disk, that is, a disk of gas ejected by the star itself and centered on it.

- QV Tel Ab, black hole: Radial velocity measurements of the inner component in 2020 suggested the presence of a massive unseen companion, which is hypothesized to be a black hole. At 1,120 light-years from the Sun, it would be the closest black hole to the Sun found so far. With an apparent magnitude of 5.36, HR 6819 would also be the first and only known black hole system visible to the naked eye, and it is one of the 2,000 brightest stellar systems. The black hole itself is not visible, and it does not interact with its companion stars to form an accretion disk.

Science Release: ESO Instrument Finds Closest Black Hole to Earth

The European Southern Observatory website announced on May 6, 2020, that:

“A team of astronomers from the European Southern Observatory (ESO) and other institutes has discovered a black hole lying just 1000 light-years from Earth. The black hole is closer to our Solar System than any other found to date and forms part of a triple system that can be seen with the naked eye. The team found evidence for the invisible object by tracking its two companion stars using the MPG/ESO 2.2-meter telescope at ESO’s La Silla Observatory in Chile. They say this system could just be the tip of the iceberg, as many more similar black holes could be found in the future.”

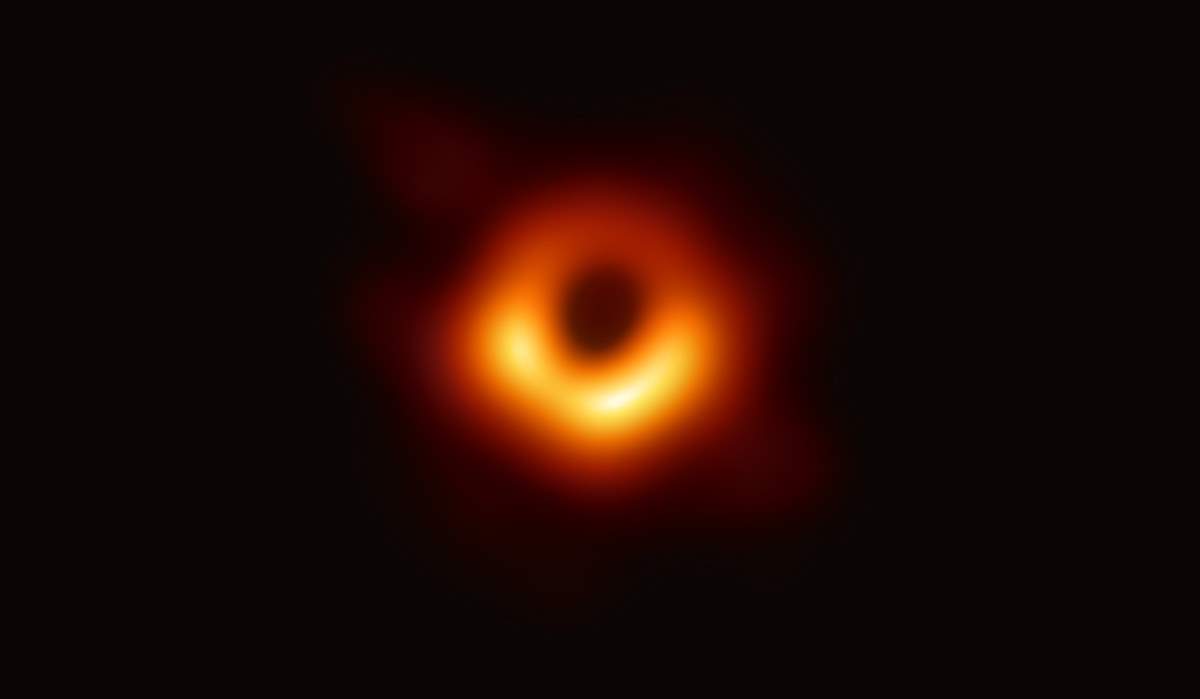

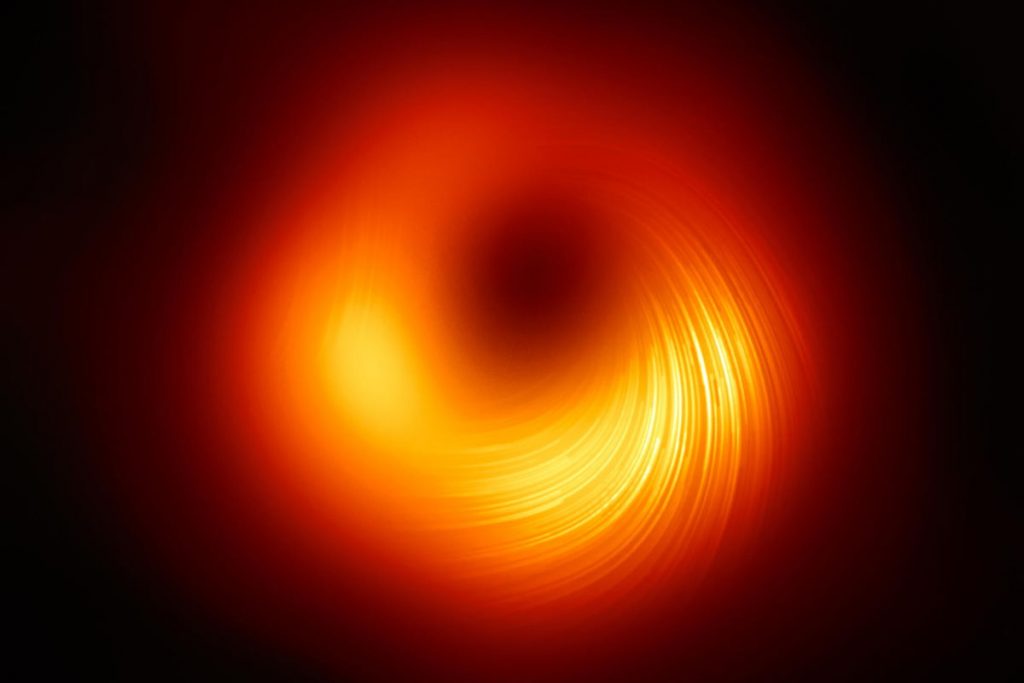


“We were totally surprised when we realized that this is the first stellar system with a black hole that can be seen with the unaided eye,” says Petr Hadrava, Emeritus Scientist at the Academy of Sciences of the Czech Republic in Prague and co-author of the research. Located in the constellation of Telescopium, the system is so close to us that its stars can be viewed from the southern hemisphere on a dark, clear night without binoculars or a telescope. “This system contains the nearest black hole to Earth that we know of,” says ESO scientist Thomas Rivinius, who led the study published today in Astronomy & Astrophysics.

The team originally observed the system, called HR 6819, as part of a study of double-star systems. However, as they analyzed their observations, they were stunned when they revealed a third, previously undiscovered body in HR 6819: a black hole. The observations with the FEROS spectrograph on the MPG/ESO 2.2-meter telescope at La Silla showed that one of the two visible stars orbits an unseen object every 40 days, while the second star is at a large distance from this inner pair.

Dietrich Baade, Emeritus Astronomer at ESO in Garching and co-author of the study, says: “The observations needed to determine the period of 40 days had to be spread over several months. This was only possible thanks to ESO’s pioneering service-observing scheme under which observations are made by ESO staff on behalf of the scientists needing them.”

The hidden black hole in HR 6819 is one of the very first stellar-mass black holes found that do not interact violently with their environment and, therefore, appear truly black. However, the team could spot its presence and calculate its mass by studying the orbit of the star in the inner pair. “An invisible object with a mass at least 4 times that of the Sun can only be a black hole,” concludes Rivinius, who is based in Chile.
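As a rough illustration of how an orbit can constrain an unseen companion's mass, the sketch below uses the standard binary mass function, f = P K³ / (2πG) = m³ sin³i / (M + m)², for a circular orbit. The 40.3-day period and the 6-solar-mass visible star come from the text above. The radial-velocity semi-amplitude `K_KM_S` is an illustrative value assumed here, not a figure quoted in this article, and the function names are hypothetical. Setting sin i = 1 (an edge-on orbit) gives a lower limit on the companion mass. This is a simplified sketch, not necessarily the team's actual analysis.

```python
import math

G = 6.67430e-11      # gravitational constant, m^3 kg^-1 s^-2
M_SUN = 1.98847e30   # solar mass, kg
DAY = 86400.0        # seconds per day


def mass_function(period_days, k_km_s):
    """Binary mass function f = P K^3 / (2 pi G), in solar masses.

    Assumes a circular orbit. K is the radial-velocity semi-amplitude
    of the visible star.
    """
    period_s = period_days * DAY
    k_m_s = k_km_s * 1e3
    return period_s * k_m_s**3 / (2 * math.pi * G) / M_SUN


def min_companion_mass(f_msun, m_visible_msun):
    """Solve m^3 = f * (M + m)^2 for m by bisection.

    This is the sin(i) = 1 case, so the result is a lower limit on the
    unseen companion's mass, in solar masses.
    """
    lo, hi = 0.0, 1000.0
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if mid**3 < f_msun * (m_visible_msun + mid) ** 2:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


PERIOD_DAYS = 40.3   # orbital period, from the article
M_VISIBLE = 6.0      # visible star mass in solar masses, from the article
K_KM_S = 61.3        # ASSUMPTION: illustrative semi-amplitude, not from the article

f = mass_function(PERIOD_DAYS, K_KM_S)
m_min = min_companion_mass(f, M_VISIBLE)
print(f"mass function: {f:.2f} solar masses")
print(f"minimum companion mass: {m_min:.2f} solar masses")
```

Under these assumptions the lower limit comes out at several solar masses, in line with the “at least 4 times that of the Sun” figure quoted above. A smaller assumed mass for the visible star, or a different `K_KM_S`, would shift the limit.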

Astronomers have spotted only a couple of dozen black holes in our galaxy to date, nearly all of which strongly interact with their environment and make their presence known by releasing powerful X-rays in this interaction. But scientists estimate that, over the Milky Way’s lifetime, many more stars collapsed into black holes as they ended their lives.

The discovery of a silent, invisible black hole in HR 6819 provides clues about where the many hidden black holes in the Milky Way might be. “There must be hundreds of millions of black holes out there, but we know about only very few. Knowing what to look for should put us in a better position to find them,” says Rivinius. Baade adds that finding a black hole in a triple system so close by indicates that we are seeing just “the tip of an exciting iceberg.”

Already, astronomers believe their discovery could shine some light on a second system. “We realized that another system, called LB-1, may also be such a triple, though we’d need more observations to say for sure,” says Marianne Heida, a postdoctoral fellow at ESO and co-author of the paper.

“LB-1 is a bit further away from Earth but still pretty close in astronomical terms, so that means that probably many more of these systems exist. By finding and studying them we can learn a lot about the formation and evolution of those rare stars that begin their lives with more than about 8 times the mass of the Sun and end them in a supernova explosion that leaves behind a black hole.”

The discoveries of these triple systems with an inner pair and a distant star could also provide clues about the violent cosmic mergers that release gravitational waves powerful enough to be detected on Earth. Some astronomers believe that the mergers can happen in systems with a similar configuration to HR 6819 or LB-1, but where the inner pair is made up of two black holes or of a black hole and a neutron star.

The distant outer object can gravitationally impact the inner pair in such a way that it triggers a merger and the release of gravitational waves. Although HR 6819 and LB-1 have only one black hole and no neutron stars, these systems could help scientists understand how stellar collisions can happen in triple-star systems.

You can see the full announcement here.

Sources

- ESO Instrument Finds Closest Black Hole to Earth on the European Southern Observatory website

- HR 6819 on Wikipedia

- Study: A naked-eye triple system with a non-accreting black hole in the inner binary on the European Southern Observatory website
